Published May 06, 2026

Trust Without Disclosure: Why Zero-Knowledge Proofs Could Help Build Trust in AI Agents

We’re moving from systems that respond to our questions to AI agents that act on our behalf. In this new era, AI agents can help book travel, manage tasks, and coordinate across systems, with less human intervention at each step.

This creates a practical problem: How do we trust these agents? How do we verify what they are allowed to do, or what they have done, without exposing sensitive information?

Enter zero-knowledge proofs, a cryptographic technique that lets you prove you know something without revealing what you know. It sounds like a magic trick, and in many ways it is. But unlike magic, the mathematics behind it is provably sound.

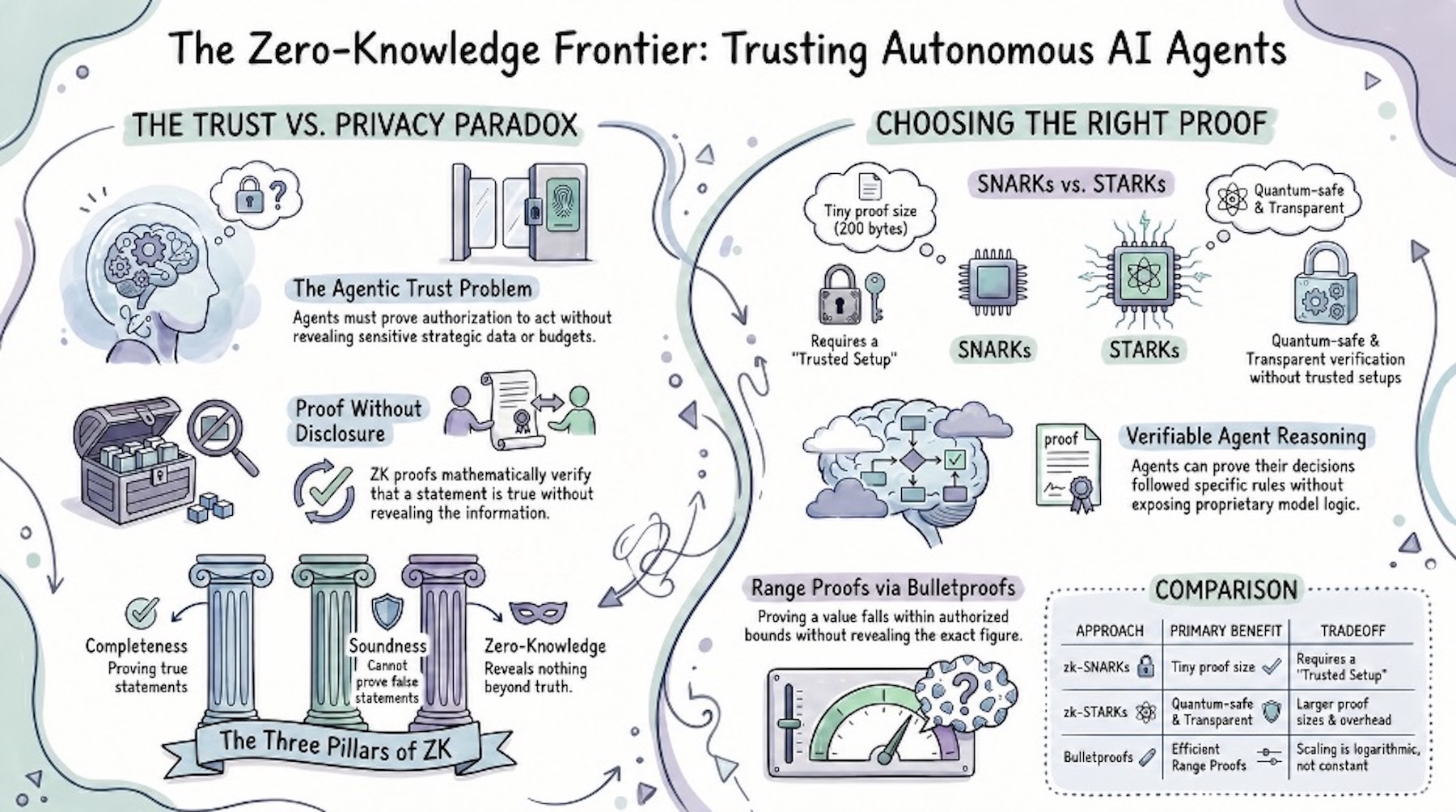

The Agentic AI Trust Problem

Consider what happens if your AI assistant negotiates a deal with a vendor’s AI agent. Your agent needs to prove it has authorization to spend within a certain threshold, but revealing your exact budget gives the vendor leverage. The vendor’s agent needs to verify that your agent isn’t bluffing, but it doesn’t need to know your financial details.

Traditional approaches fail here. Revealing everything destroys negotiating leverage. Revealing nothing undermines trust. We need something in between: proof without disclosure.

This isn’t hypothetical. As organizations explore AI agents for more sensitive workflows across healthcare systems, financial platforms, and enterprise infrastructure, the question of how agents prove things to each other has become urgent.

Proving Without Showing: The ZK Paradigm

The classic illustration involves a cave with two paths, A and B, that meet at a magic door in the back. Peggy claims she knows the password to open the door. Victor wants proof, but Peggy refuses to reveal the password itself.

The protocol: Peggy enters the cave and randomly chooses a path. Victor, who can’t see which path she took, calls out which path he wants her to exit from. If Peggy knows the password, she can always comply—she either exits from the path she entered or uses the door to cross to the other side.

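If Peggy doesn’t know the password, she can only exit the way she entered, so she passes a round only when Victor happens to call that path: a 1-in-2 chance. Each successful round therefore cuts Victor’s doubt in half. After 20 rounds, the chance that Peggy is faking it is 1 in 1,048,576 (2^20), less than one in a million. Victor becomes statistically convinced Peggy knows the password without ever learning what it is.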

This captures the three essential properties of zero-knowledge proofs.

- Completeness: if Peggy truly knows the password, she can always convince Victor.

- Soundness: if Peggy doesn’t know the password, she can’t consistently fool Victor.

- Zero-Knowledge: Victor learns nothing beyond the fact that Peggy knows the password.
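
The cave protocol and its soundness arithmetic can be sketched as a small simulation. This is an illustrative model only: the function names are hypothetical, and the "password" is reduced to a boolean flag rather than real cryptography.

```python
import random

def run_cave_protocol(knows_password: bool, rounds: int, rng: random.Random) -> bool:
    """Return True if Peggy passes every round of the cave protocol."""
    for _ in range(rounds):
        entered = rng.choice("AB")    # Peggy picks a path out of Victor's sight
        requested = rng.choice("AB")  # Victor calls out the exit he wants
        # With the password Peggy can cross the door and exit anywhere;
        # without it she can only come back out the way she went in.
        if not knows_password and entered != requested:
            return False
    return True

def cheating_success_probability(rounds: int) -> float:
    """Chance that a Peggy without the password survives all rounds."""
    return 0.5 ** rounds

if __name__ == "__main__":
    rng = random.Random(0)
    trials = 20_000
    passed = sum(run_cave_protocol(False, 5, rng) for _ in range(trials))
    print(f"cheater passed 5 rounds in {passed / trials:.3%} of trials")
    print(f"theoretical bound for 5 rounds:  {cheating_success_probability(5):.3%}")
    print(f"theoretical bound for 20 rounds: {cheating_success_probability(20):.2e}")
```

An honest Peggy always passes (completeness), while a cheating Peggy's success rate halves with every added round (soundness). The simulation says nothing about the zero-knowledge property, which depends on what Victor could learn from the transcript.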

From Theory to Agentic Reality

The cave example is interactive—it requires back-and-forth communication. Many modern ZK systems have evolved to support non-interactive proofs, where a prover generates a single proof that anyone can verify without further communication. This is essential for agentic AI, where agents may need to prove credentials asynchronously across different systems.
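
One well-known way to remove the back-and-forth is the Fiat-Shamir heuristic: the prover derives the verifier's challenge by hashing the transcript instead of waiting for it. The sketch below applies that idea to a Schnorr-style proof of knowledge of a secret exponent. It is not a SNARK or a STARK, and everything in it is an assumption made for illustration: the tiny group parameters `P`, `Q`, `G` are far too small to be secure, and the function names are hypothetical.

```python
import hashlib
import secrets

# Toy group parameters (assumption, far too small for real use):
# P = 2*Q + 1 with P and Q prime, and G generating the subgroup of order Q.
P, Q, G = 2039, 1019, 4

def _challenge(public_key: int, commitment: int) -> int:
    """Derive the challenge by hashing the transcript (Fiat-Shamir heuristic)."""
    data = f"{P}|{Q}|{G}|{public_key}|{commitment}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

def keygen() -> tuple[int, int]:
    """Return (secret, public_key) where public_key = G^secret mod P."""
    secret = secrets.randbelow(Q - 1) + 1  # the "password", in [1, Q-1]
    return secret, pow(G, secret, P)

def prove(secret: int) -> tuple[int, int]:
    """Produce a one-message proof of knowledge of `secret`."""
    public_key = pow(G, secret, P)
    nonce = secrets.randbelow(Q)  # fresh randomness for every proof
    commitment = pow(G, nonce, P)
    c = _challenge(public_key, commitment)
    response = (nonce + c * secret) % Q
    return commitment, response

def verify(public_key: int, proof: tuple[int, int]) -> bool:
    """Accept only if G^response == commitment * public_key^c (mod P)."""
    commitment, response = proof
    c = _challenge(public_key, commitment)
    return pow(G, response, P) == (commitment * pow(public_key, c, P)) % P
```

A prover would call `prove(secret)` once and publish the resulting pair; any party holding the public key can later call `verify` without contacting the prover again, which is the asynchronous property the paragraph above describes.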

Three main approaches have emerged, each with distinct trade-offs:

zk-SNARKs: Compact but Trust-Dependent

Succinct Non-Interactive Arguments of Knowledge produce remarkably small proofs—around 200 bytes regardless of what’s being proven. Verification is fast, making them ideal for resource-constrained environments. The catch: they require a trusted setup ceremony. If this setup is compromised, fake proofs become possible.

The trusted setup challenge: SNARKs require a one-time ceremony where multiple parties jointly generate cryptographic parameters. The setup remains secure as long as at least one participant acts honestly and destroys their secret input; proofs could be forged only if every participant colluded to combine their secret inputs. This 1-of-N security model is why ceremonies involve many independent participants, but the coordination required is operationally complex for agentic systems that need rapid, dynamic deployment. Newer “universal setup” approaches (like PLONK) reduce this burden but don’t eliminate it entirely.
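
The 1-of-N property can be illustrated with a greatly simplified model of a multi-party ceremony. In this sketch, each participant raises a public accumulator to a fresh secret power, so the final trapdoor is the product of all the secrets. Real ceremonies produce far richer parameters and include checks that each contribution is well formed; the toy group and the function names here are assumptions for illustration only.

```python
import secrets
from functools import reduce

# Toy group (assumption, insecure): P = 2*Q + 1, and G has prime order Q.
P, Q, G = 2039, 1019, 4

def contribute(accumulator: int) -> tuple[int, int]:
    """One participant mixes a fresh secret into the public accumulator."""
    secret = secrets.randbelow(Q - 1) + 1
    return pow(accumulator, secret, P), secret

def run_ceremony(participants: int) -> tuple[int, list[int]]:
    """Run contributions in sequence and return the final public value."""
    accumulator, secrets_used = G, []
    for _ in range(participants):
        accumulator, s = contribute(accumulator)
        secrets_used.append(s)  # kept here only so the sketch can inspect them
    return accumulator, secrets_used

def combined_trapdoor(all_secrets: list[int]) -> int:
    """The trapdoor is the product of every contribution, so all are needed."""
    return reduce(lambda a, b: (a * b) % Q, all_secrets, 1)
```

The final accumulator equals `G` raised to `combined_trapdoor(...)`. In a group of realistic size, anyone missing even one participant's secret would have to solve a discrete logarithm to recover that exponent, which is why a single honest participant who deletes their secret protects the whole setup.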

zk-STARKs: Transparent and Post-Quantum Friendly

Scalable Transparent Arguments of Knowledge eliminate the trusted setup entirely. Everything needed to verify proofs is publicly derivable. They’re also built on hash functions rather than elliptic curves, making them more resistant to quantum computing attacks. The trade-off: larger proofs (tens to hundreds of kilobytes instead of a few hundred bytes) and more computational overhead, which can increase verification time and on-chain costs.

Bulletproofs: Efficient and Setup-Free

Bulletproofs require no trusted setup and are particularly well-suited for proving that a value falls within a certain range without revealing the value itself. Proof size grows only logarithmically with the size of the statement being proven, keeping proofs compact even in constrained environments, although verification time grows roughly linearly and is slower than for SNARKs.
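
Bulletproofs range proofs are typically stated about a value hidden inside a Pedersen commitment. A full range proof is beyond a short example, but the commitment itself can be sketched. The parameters below are toy assumptions and insecure: in a real system the generators come from a large group, and nobody may know the discrete log of `H` with respect to `G`. The function names are hypothetical.

```python
import secrets

# Toy group (assumption, insecure): P = 2*Q + 1, G and H have prime order Q.
# In a real system nobody may know the discrete log of H with respect to G.
P, Q, G, H = 2039, 1019, 4, 9

def commit(value: int, blinding: int) -> int:
    """Pedersen commitment: hides `value` behind a random `blinding` factor."""
    return (pow(G, value % Q, P) * pow(H, blinding % Q, P)) % P

def open_commitment(commitment: int, value: int, blinding: int) -> bool:
    """Check that (value, blinding) is a valid opening of the commitment."""
    return commitment == commit(value, blinding)

def add_commitments(c1: int, c2: int) -> int:
    """Multiplying commitments adds the hidden values (and blindings)."""
    return (c1 * c2) % P

if __name__ == "__main__":
    r = secrets.randbelow(Q)
    budget_commitment = commit(500, r)  # the budget stays hidden
    print(open_commitment(budget_commitment, 500, r))
```

An agent could publish `budget_commitment` and later attach a range proof showing the committed value exceeds a quoted price. That second step is what a Bulletproofs library would provide and is not shown here.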

Performance Reality: Async Over Real-Time

Proof generation takes time—seconds to minutes depending on circuit complexity. Today, this often makes ZK proofs better suited for asynchronous workflows: pre-flight credential checks, batch audit generation, or background compliance verification. An agent negotiating a contract can generate proofs between message exchanges; an agent executing millisecond trades cannot. Hardware acceleration is closing this gap but hasn’t eliminated it.
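
The asynchronous pattern described above, generating a proof while a negotiation continues, might look like the following sketch. Here `generate_proof` is a hypothetical stand-in that sleeps and hashes its input; it is not a real prover, and the message flow is invented for illustration.

```python
import asyncio
import hashlib
import time

def generate_proof(statement: str) -> str:
    """Hypothetical stand-in for a slow, CPU-heavy prover."""
    time.sleep(0.2)  # pretend this is seconds to minutes of proving work
    return hashlib.sha256(statement.encode()).hexdigest()

async def negotiate(statement: str, messages: list[str]) -> tuple[list[str], str]:
    """Exchange messages while the proof is produced in the background."""
    # Kick off proving in a worker thread so the event loop stays responsive.
    proof_task = asyncio.create_task(asyncio.to_thread(generate_proof, statement))
    transcript = []
    for message in messages:
        await asyncio.sleep(0.05)  # stand-in for a network round trip
        transcript.append(f"sent: {message}")
    proof = await proof_task  # attach the proof once it is ready
    return transcript, proof

if __name__ == "__main__":
    log, proof = asyncio.run(
        negotiate("budget >= quoted price", ["hello", "offer", "counter"])
    )
    print(log, proof[:16])
```

A worker thread is enough for this sketch because the stand-in only sleeps. A real prover is usually native code or a separate service, so a process pool or a remote proving endpoint may be a better fit.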

Where ZK Proofs Meet Agentic AI

The intersection of zero-knowledge proofs and agentic AI opens possibilities that neither technology enables alone:

Agent-to-Agent Authentication

When AI agents interact, they need to verify each other’s capabilities and authorizations. An agent could prove it’s authorized to access certain data, that its operation falls within specified parameters, or that it meets the requirements set by the receiving system—all without revealing the underlying credentials or system architecture.

Verifiable Agent Reasoning

One of the challenges with AI agents is understanding why they made certain decisions. ZK proofs could allow an agent to prove its reasoning followed certain rules or constraints without exposing its full reasoning chain, protecting proprietary models while enabling accountability.

Privacy-Preserving Collaboration

Multiple AI agents working together often need to share information selectively. A medical AI agent could prove that a patient meets defined eligibility criteria without revealing their complete medical history. A financial AI agent could prove that a transaction falls within approved limits without exposing full account details.

Audit Without Surveillance

Regulators and compliance systems need to verify AI agents operate within bounds, but constant surveillance creates privacy and competitive concerns. ZK proofs enable agents to generate audit trails that support compliance audits without exposing operational details.

Real-World Adoption: DIDs, VCs, and Beyond

Some Verifiable Credentials (VCs) and Decentralized Identifiers (DIDs) already leverage ZK proofs in production environments. Standardized credential frameworks and digital identity wallet initiatives are enabling selective disclosure—proving “I’m over 18” or “I hold certification X” without exposing full identity documents. Agentic commerce frameworks are now exploring VCs as the trust substrate for agent-to-agent transactions.

On an emerging frontier, ZK circuits are being developed that allow model creators to prove their training data was used under selected licensing or data-governance requirements—without revealing the dataset itself. As regulators increase scrutiny of AI training practices, this capability becomes a potential differentiator.

Current Limitations

Honest assessment is essential. Several constraints limit immediate deployment:

- Tooling fragmentation: Proofs generated in one system (Circom) may not readily verify in another (Noir) without translation. Portability across agentic platforms, where Agent A’s proof must verify on Agent B’s stack, remains immature.

- Blockchain dependency: Many of the most mature ZK implementations emerged from blockchain infrastructure (Ethereum L2s, Zcash, Mina). Enterprise tooling outside crypto is maturing but early-stage.

- Computational overhead: Proof generation remains resource-intensive. Better suited for high-value, asynchronous verification than real-time decision loops.

- Standards gap: There is not yet a broadly adopted standard for ZK-based trust in AI agent interactions. W3C’s DID and Verifiable Credentials specs provide the most mature foundation, already referenced by governments (EU eIDAS 2.0) and enterprises. The Decentralized Identity Foundation (DIF) and Internet Identity Workshop (IIW) are convening efforts, but agent-to-agent trust protocols remain undefined.

Looking Forward

The trust infrastructure for AI agents is still catching up to their capabilities. Zero-knowledge proofs represent one promising direction—offering a mechanism to establish verifiable trust without requiring full disclosure of underlying data.

Early convergence is visible. ZK-based identity frameworks are being explored as a way for agents to assert credentials selectively. Verifiable computation approaches could allow an agent to demonstrate what code it ran and on what inputs—shifting the basis of trust from assertion to proof. Standards work is beginning to examine how these tools might support compliant AI operations across different regulatory contexts.

Whether and how quickly these approaches are adopted remains an open question. But the underlying cryptographic primitives are well-studied, and the problems they address are real.

The cave example showed how to prove you know a password without revealing it. The agentic AI era presents an opportunity to scale that principle to everything agents do: proving authorization, proving compliance, and proving correctness, all without disclosure.

What began as an elegant mathematical curiosity in 1985 may become part of the trust infrastructure for a world where autonomous agents act on our behalf. An idea that once seemed like magic may prove increasingly practical as autonomous systems take on more responsibility.

About the Author